
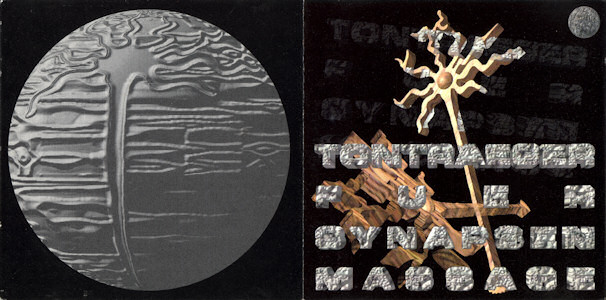

A longstanding question in sensory neuroscience is what types of stimuli drive neurons to fire. The characterization of effective stimuli has traditionally been based on a combination of intuition, insights from previous studies, and luck. A new method termed XDream (EXtending DeepDream with real-time evolution for activation maximization) combined a generative neural network and a genetic algorithm in a closed loop to create strong stimuli for neurons in the macaque visual cortex. Here we extensively and systematically evaluate the performance of XDream. We use ConvNet units as in silico models of neurons, enabling experiments that would be prohibitive with biological neurons. We evaluated how the method compares to brute-force search, and how well the method generalizes to different neurons and processing stages. We also explored design and parameter choices. XDream can efficiently find preferred features for visual units without any prior knowledge about them. XDream extrapolates to different layers, architectures, and developmental regimes, performing better than brute-force search, and often better than exhaustive sampling of >1 million images. Furthermore, XDream is robust to choices of multiple image generators, optimization algorithms, and hyperparameters, suggesting that its performance is locally near-optimal. Lastly, we found no significant advantage to problem-specific parameter tuning. These results establish expectations and provide practical recommendations for using XDream to investigate neural coding in biological preparations. Overall, XDream is an efficient, general, and robust algorithm for uncovering neuronal tuning preferences using a vast and diverse stimulus space. XDream is implemented in Python, released under the MIT License, and works on Linux, Windows, and MacOS.

To find out which sights specific neurons in monkeys 'like' best, researchers designed an algorithm, called XDREAM, that generated images that made neurons fire more than any natural images the researchers tested. As the images evolved, they started to look like distorted versions of real-world stimuli. The work appears May 2 in the journal Cell: "Evolving images for visual neurons using a deep generative network reveals coding principles and neuronal preferences."

Figure caption: a,b,c) Effect of using different random initializations. a) Distributions of fractional change in optimized activation if 10 different random initializations are used. b) Left, relative activation in response to images interpolated (in the code space) between two optimized images from two different random initial conditions. Right, activation normalized to the endpoints (location 0 or 1), highlighting the change in activation away from the endpoints. c) Optimized images from different initializations for 3 example units in the output layer (one unit per row). Activation values are shown above each image.

For each target unit, its best, middle, or worst 20 images from ImageNet were used as the initial generation. The images were converted to the image code space using either an optimization method (“opt”) or an inversion method (“ivt”; Methods). Left to right within the opt and ivt groups are results from initialization with the worst, middle, and best 20 images. Random initialization is shown for comparison. The open and solid violins show the distributions, in the first and last generation respectively, of relative activation over 100 units in each layer.

An Atlantic article describing the work is here. The article is really interesting; here’s the setup:

In April 2018, a monkey named Ringo sat in a Harvard lab, sipping juice, while strange images flickered in front of his eyes. The pictures were created by an artificial-intelligence algorithm called XDREAM, which gradually tweaked them to stimulate one particular neuron in Ringo’s brain, in a region that’s supposedly specialized for recognizing faces. As the images evolved, the neuron fired away, and the team behind XDREAM watched from a nearby room.

At first, the pictures were gray and formless. But as time passed, “from this haze, something started staring back at us,” says the neuroscientist Carlos Ponce. Two black dots with a black line beneath them, all against a pale oval. Soon a red patch appeared next to it, which reminded the watching researchers of the red collar worn by a monkey who lives in the cage opposite Ringo’s. “We all looked at it and said, ‘Oh, that’s Anthony,’” says Margaret Livingstone, a neuroscientist at Harvard Medical School.

“And then, a few days later, we evolved Diane,” she adds. Diane is one of the monkeys’ caretakers, who feeds them while wearing blue scrubs and a white face mask. And when the team hooked XDREAM up to another of the monkey’s visual neurons, it produced a distorted image of a face in a white mask.
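The closed loop that the XDream abstract describes, a generative network paired with a genetic algorithm, can be illustrated with a toy sketch. This is not the XDream code or its API. The generator and the "neuron" here are hypothetical stand-ins (`toy_generator`, `toy_neuron`), the parameter values are arbitrary, and the scoring is noise-free, unlike a real recording. The sketch only shows the loop's general shape: generate images from codes, score them by the unit's response, keep the best codes, then recombine and mutate them.

```python
import random

CODE_DIM = 16    # hypothetical length of an image code
POP_SIZE = 20    # hypothetical number of images shown per generation
N_KEEP = 5       # top-scoring codes kept as parents
MUT_RATE = 0.25  # chance that a given code entry is mutated
MUT_SD = 0.3     # standard deviation of the Gaussian mutation


def toy_generator(code):
    """Stand-in for the generative network: the 'image' is just the code."""
    return list(code)


def toy_neuron(image, preferred):
    """Stand-in for a neuron: responds more as the image nears a hidden pattern."""
    dist2 = sum((x - p) ** 2 for x, p in zip(image, preferred))
    return 1.0 / (1.0 + dist2)


def evolve(score_fn, n_generations=100, seed=0):
    """Run a simple genetic algorithm over image codes; return (best code, history)."""
    rng = random.Random(seed)
    population = [[rng.gauss(0, 1) for _ in range(CODE_DIM)]
                  for _ in range(POP_SIZE)]
    history = []  # best response seen in each generation
    for _ in range(n_generations):
        # "Show" each generated image to the unit and record its response.
        scores = [score_fn(toy_generator(code)) for code in population]
        order = sorted(zip(scores, population), key=lambda t: t[0], reverse=True)
        ranked = [code for _, code in order]
        history.append(order[0][0])
        # Keep the best codes unchanged, then fill up with mutated offspring.
        parents = ranked[:N_KEEP]
        children = [list(p) for p in parents]
        while len(children) < POP_SIZE:
            a, b = rng.sample(parents, 2)
            child = [rng.choice(pair) for pair in zip(a, b)]  # uniform crossover
            child = [g + rng.gauss(0, MUT_SD) if rng.random() < MUT_RATE else g
                     for g in child]
            children.append(child)
        population = children
    return ranked[0], history


if __name__ == "__main__":
    rng = random.Random(1)
    preferred = [rng.uniform(-1, 1) for _ in range(CODE_DIM)]
    best, history = evolve(lambda img: toy_neuron(img, preferred))
    print("first-generation best response:", history[0])
    print("last-generation best response:", history[-1])
```

The loop never looks inside `score_fn`. It only needs a number per image, which is why the same scheme can be pointed at a ConvNet unit or, in principle, at a recorded neuron.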
